by Maritte O’Gallagher

From block copolymers used in next-generation chips to nanocrystals that self-assemble into superlattices for energy storage applications, scientists use x-ray scattering Beamline 7.3.3 at the Advanced Light Source (ALS) to study diverse materials under conditions that mimic their real-world applications. Researchers might expose samples to open flames inside 7.3.3’s hutch, or test how batteries behave when charging or discharging. 7.3.3 boasts the highest volume of publications of any beamline at the ALS, consistently attracting users with a broad range of research interests.

It’s complicated to build anything for a beamline that hosts such a variety of science, but a newly formed interdisciplinary team is taking on the challenge, integrating robotics and artificial intelligence (AI) to develop a self-driving workflow. The initiative is part of the RADIUS (Rapid Deployment of Instrumentation for User Science) Robotics project, a strategic push to leverage automation and AI for enhancing beamline science. The group’s recent implementation of a robotic measurement system at 7.3.3 sets the stage for AI-based adaptive decision making to supercharge discovery and optimization of numerous high-impact functional materials.

The new robotic platform automates grazing-incidence wide-angle x-ray scattering (GIWAXS), a technique for characterizing the atomic and molecular structure of thin-film nanostructured materials. GIWAXS offers precise insights that help researchers build better materials, including components of flexible displays, specialized sensors, and drug delivery systems. Now, AI and automation are poised to help GIWAXS users make better use of their limited beamtime, translating into discoveries that address pressing global challenges.

This isn’t the first ALS beamline to add robots to the mix, or even the first robot that scientists have implemented at 7.3.3. But the RADIUS Robotics project has a fresh outlook, an expanded scope, and an accelerated timeline.

“This time we’re thinking bigger, building toward a more integrated system,” said Dula Parkinson, photon science operations manager at the ALS and project lead for RADIUS. “We wanted to learn from what others have done incorporating robotics into a beamline workflow and be thoughtful about how it all fits together, with the goal of building something we use on a regular basis going forward.”

In the project’s first year, the RADIUS team has built a new GIWAXS system architecture that supports a robotic arm moving samples in and out of the helium-purged sample chamber, which is designed to reduce background effects from air scattering and keep air-sensitive samples stable during measurements. The beamline can now process a higher volume of samples, not only faster but also more efficiently, with fewer deviations caused by human error and a more streamlined use of resources. Best of all, data collection can now continue unattended once the experiment is set up.

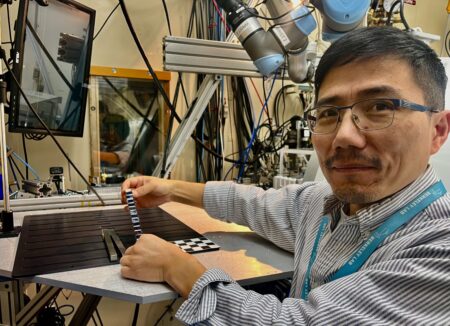

Previously, it took several hours to measure a limited number of samples, and someone needed to stay at the beamline to swap in each set. Now, hundreds of samples can be stored on a tray table and programmed to be measured automatically. All the user or beamline scientist has to do is enter sample and measurement information into a web portal that generates a QR code for each set of samples, and initiate a programmed sequence. At the beamline, a robotic arm picks up one set of samples, scans the attached QR code to identify the set before data acquisition, and places it into the beamline’s measurement chamber. The system carries out alignment protocols and guides data collection with high precision. Once the measurement process is complete, the robotic arm returns the samples to the tray and autonomously moves on to the next set. A unified software control interface coordinates the whole sequence, and the web portal allows users to track samples and experimental data.
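For readers who think in code, the sequence above can be pictured roughly as the loop below. This is only an illustrative sketch, not the RADIUS team's software: the names `SampleSet`, `Tray`, `measure`, and `run_sequence` are hypothetical, the QR code is modeled as a plain string, and the alignment and data collection steps are replaced by a stand-in that just returns file names.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SampleSet:
    """One set of samples, identified by the text encoded in its QR code."""
    qr_code: str
    sample_names: list[str]
    measured: bool = False


@dataclass
class Tray:
    """Tray table: slot number -> sample set waiting to be measured."""
    slots: dict[int, SampleSet] = field(default_factory=dict)


def measure(sample_set: SampleSet) -> dict[str, str]:
    """Hypothetical stand-in for alignment and GIWAXS data collection."""
    return {name: f"{sample_set.qr_code}/{name}.dat" for name in sample_set.sample_names}


def run_sequence(tray: Tray, registered: set[str]) -> dict[str, dict[str, str]]:
    """Visit each tray slot in order and measure every registered sample set."""
    results: dict[str, dict[str, str]] = {}
    for slot in sorted(tray.slots):
        sample_set = tray.slots[slot]
        # "Scan" the QR code: skip sets that were never entered in the portal.
        if sample_set.qr_code not in registered:
            continue
        # Load into the chamber, measure, then return the set to its slot.
        results[sample_set.qr_code] = measure(sample_set)
        sample_set.measured = True
    return results


# Example: one registered set and one set the portal does not know about.
tray = Tray({
    1: SampleSet("SET-001", ["film_a", "film_b"]),
    2: SampleSet("SET-999", ["unlabeled"]),
})
results = run_sequence(tray, registered={"SET-001"})
```

In this toy version, tying every measurement to the scanned code is what keeps data files matched to the sample information entered beforehand, which is the traceability benefit the article describes.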

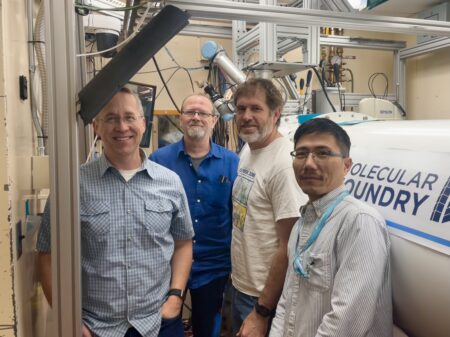

In its first year, the RADIUS Robotics project team has implemented a robotics system that automates sample collection and metadata management at Beamline 7.3.3. This clip shows the UR5e robotic arm moving samples into the helium-purged measurement chamber. (Credit: Chenhui Zhu/Berkeley Lab)

Developing the robotic control software, setting up a centralized database for tracking samples, and building the front-end web portal involved cohesive forethought and collaboration.

“The biggest challenge of this project has been the many different components we needed to put together, and how many different kinds of expertise we needed to have the whole system work,” said Parkinson. To achieve such a specialized and comprehensive workflow, the RADIUS team drew on the skills of over 20 ALS professionals, including engineers, beamline controls developers, computational experts, and scientists.

Chenhui Zhu, a staff scientist at Beamline 7.3.3, has provided much of the scientific guidance for the RADIUS Robotics project. In a typical week of supporting user research, Zhu is accustomed to swapping out the sample holder at 7.3.3 from one that holds liquids or powders to a platform for a specialized polymer, or tweaking the settings in the vacuum chamber to study a material under specific pressure conditions. His intimate understanding of the research at 7.3.3 was instrumental in creating a robotic workflow compatible with user research needs, and will continue to be critical as the team expands the platform to support additional sample types and environmental conditions. While integrating the ability to handle air- or temperature-sensitive samples and real-time data analysis into the automated workflow will take hard work, Zhu understands which capabilities will make the biggest impact, and believes it will be worth it.

“There’s a lot of overhead in training new students and users on beamline data collection and analysis,” said Zhu. “Robots and computer algorithms are helping make that process five to ten times more efficient.”

Beyond robot baristas

Despite the perks of their newly automated GIWAXS workflow, the team still feels that what they’ve achieved so far is closer to a robot barista than a self-driving car. A robot barista’s arm will make an espresso, as directed. But self-driving is more complicated: a user picks a destination, and the algorithm figures out how to get there. There are many more details to resolve, and the same is true when leveraging AI and robotics to make the process of materials discovery and optimization truly self-driving.

“We’re not just trying to measure things faster, we’re trying to measure things more intelligently,” said Zhu.

The robotic GIWAXS platform is Phase I of the project, providing the crucial technological foundation for future AI-guided materials discovery workflows. Development is already underway for Phase II, which will develop a real-time, AI-driven data analysis pipeline and add a robot that can fabricate thin-film samples through the sequential steps of solution mixing, spin coating, and thermal annealing. Together, these capabilities will allow the system to dynamically respond during data collection and direct the robot to produce new sample formulations optimized for various scientifically relevant targets.

For example, the system could vary the blending and swelling ratios in block copolymer blends to optimize size, spacing, and ordering attributes—key factors for minimizing feature size in future chip manufacturing. In other words, integrating this platform into the measurement workflow would enable autonomous, closed-loop experimentation in which thin films are fabricated directly at the beamline and characterized in real time based on ongoing experimental results—achieving the team’s long-term goal of truly self-driving materials discovery and optimization. The speed, precision, and iterative power of such a framework could yield practical results in accelerating development of technologies like advanced water filters and high-performance electric vehicle batteries.
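The closed-loop idea can be illustrated with a toy example. The sketch below is an assumption-laden stand-in, not the project's planned AI pipeline: `simulated_ordering_score` is a made-up function that replaces the fabricate, measure, and analyze steps, its peak at a blend ratio of 0.35 is arbitrary, and the simple "measure a grid, then zoom in" strategy takes the place of whatever adaptive algorithm the team ultimately adopts.

```python
def simulated_ordering_score(blend_ratio: float) -> float:
    """Hypothetical stand-in for fabricate + measure + analyze; peaks at 0.35."""
    return 1.0 - (blend_ratio - 0.35) ** 2


def closed_loop(measure, low=0.0, high=1.0, rounds=6, points=5):
    """Measure a coarse grid of ratios, then repeatedly zoom in on the best one."""
    lower_bound, upper_bound = low, high
    best_ratio, best_score = low, float("-inf")
    for _ in range(rounds):
        step = (high - low) / (points - 1)
        for i in range(points):
            ratio = low + i * step
            score = measure(ratio)  # each call represents one new sample
            if score > best_score:
                best_ratio, best_score = ratio, score
        # Use the results so far to choose the next, narrower search window.
        low = max(lower_bound, best_ratio - step)
        high = min(upper_bound, best_ratio + step)
    return best_ratio, best_score


best_ratio, best_score = closed_loop(simulated_ordering_score)
```

The point of the sketch is the feedback step: each round of measurements decides which formulations are made next, rather than working through a list fixed in advance.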

While this first system would be specialized for thin films, the team plans to expand the process to work for other sample types. They’ve consulted their user community to guarantee alignment with regularly used sample formats. To make their contribution to nanomaterials research competitive with more specialized facilities, the group has collaborated with experts at Berkeley Lab’s Molecular Foundry, a nanoscale science user research facility. By sourcing broad input throughout the design process, the team aims to create a workflow that will be transferable to other beamlines and future beamline generations.

Their progress will dovetail with upcoming improvements included in the ALS Upgrade. The SAXS/WAXS beamline will be relocated from Beamline 7.3.3 to a new beamline to be built at 4.3.1, which will provide a major boost in photon power. These upgrades are slated to significantly enhance experimental efficiency and measurement speed, resulting in a higher volume of data than ever before. The RADIUS team intends to utilize computing resources at the National Energy Research Scientific Computing Center (NERSC) to develop data storage and processing solutions for the incoming increase in data generation.

All eyes on AI

As it stands, the completion of Phase I has successfully integrated sample handling, identification, and metadata management into an automated workflow for Beamline 7.3.3, resulting in significantly enhanced efficiency, traceability, and reproducibility. It could also serve as a model for other beamlines and synchrotron facilities. But the RADIUS Robotics team is feeling the squeeze to complete Phase II.

“People are excited about self-driving beamlines,” said Zhu. “They’re waiting to see us demonstrate the scientific impact.”

Going forward, the group is up against significant challenges in corralling a multitude of moving parts, but they are in it for the long haul. And they have a few other success stories to draw inspiration from.

“Protein crystallography beamlines are a great example of a dedicated effort to get robust robotics integration, and they’ve made it happen,” Parkinson said, citing the structural biology community. “We’re facing more complexities in making a robot that’s useful across many applications, but we’re committed to keep hammering on this until we get it.”

Additional ALS staff and users involved in the RADIUS Robotics project include:

Eric Schaible, Ivan Galikeev, Matthew Roizin-Prior, Garret Birkel, Yunfei Wang (ALS Collaborative Postdoctoral Fellow from University of Southern Mississippi), Wiebke Koepp, Raja Vyshnavi Sriramoju, Harold Barnard, Chinweike Osubor, Camille Molsick-Gibson, Piotr Gach, Sujoy Roy, Xiaodan Gu (University of Southern Mississippi), Dylan McReynolds, Alexander Hexemer, Lucas Kistulentz, and Damon English.

Listen to the RADIUS robotics team discuss their story in this interview with Charlotta Chan: Mission Possible Podcast: Episode 2: OMG, it’s GIWAXS!